by H. Sterling Burnett, Apr 23, 2025 in ClimateChangeDispatch

Wisconsin Public Radio (WPR) ran a story blaming the unusual number of wildfires in the state in January and February on climate change. This is wrong. One year’s early start to the wildfire season can’t be blamed on climate change. [emphasis, links added]

Only a long-term trend of increasing or increasingly early wildfires would suggest climate change as a factor in this year’s fires, but no such trend exists.

A buildup of vegetation due to improved rainfall conditions in previous years, human populations expanding into the urban/forest interface, and more human-sparked fires from carelessness and arson are the causes of the unusual number of wildfires starting off the year in 2025.

The WPR story, “Wisconsin sees record start to the fire season as climate change drives more blazes,” which is long on speculation but short on hard data and evidence, says:

“Wisconsin saw a record number of fires in January and February this year due to a lack of snow as climate change has set the stage for more wildfires,” says Danielle Kaeding, WPR’s environment and energy reporter for Northern Wisconsin. “Wisconsin averages 864 wildfires that burn around 1,800 acres each year, according to the state Department of Natural Resources.

“The state had already seen more than 470 fires as of Monday, double the average for this time of year. More than 1,900 acres have already been set ablaze,” Kaeding continues.

Kaeding interviewed Jim Bernier, the Wisconsin Department of Natural Resources (WDNR) forest fire section manager, about the fires, which he blamed on two years of drought caused by climate change.

“With these droughty conditions that we’re experiencing, we’re seeing these fire-staffing needs occurring more and more all year round,” Bernier said. “We’ve never had this many fires in January and February ever in the state of Wisconsin.”

Bernier’s claim is belied by the fact that Wisconsin is not in drought, much less an unusually severe one.

Data from the U.S. National Integrated Drought Information System (NIDIS) shows that in January through March of 2025, precipitation was nearly an inch above normal, with 2025 being the 35th wettest year [since] 1895. At present, no counties in Wisconsin are designated as being under Drought Disaster conditions.

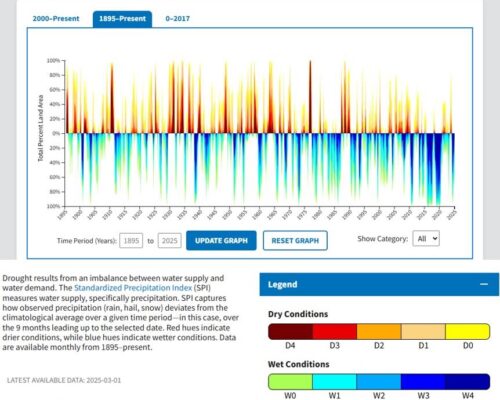

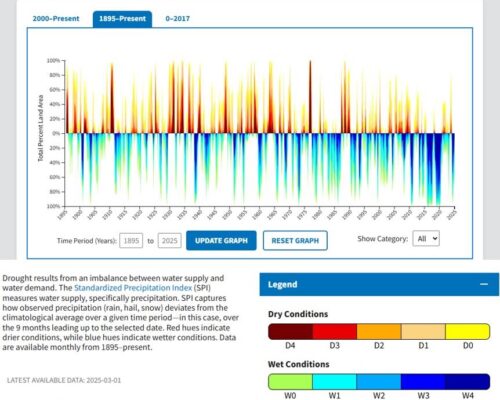

Long-term drought data for Wisconsin show that over the past 30 years, drought conditions have been less severe than historically common, with the last decade being particularly wet in general. (See the graph from NIDIS, below.)

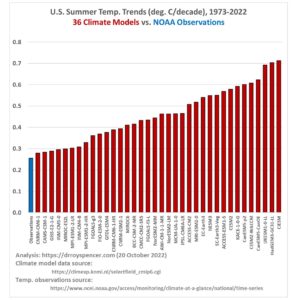

The fact that Wisconsin has not suffered unusual degrees of drought or extremely hot temperatures in recent years is confirmed in the National Oceanic and Atmospheric Administration’s Wisconsin State Climate Summary, which reports that the number of very hot days in Wisconsin has declined sharply over the past century, while the amount of winter and summer precipitation has either slightly increased or remained about the same.

…