by S. McIntyre, Aug 26, 2021 in ClimateAudit

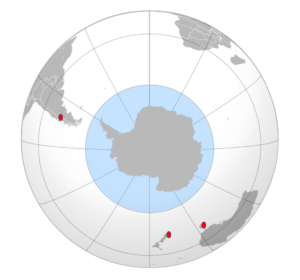

The 30-60N latitude band gets lots of attention in paleoclimate collections – it probably has more proxies than the rest of the world combined. The 30-60S latitude band is exactly the same size, but it is little studied. It is the world of the Roaring Forties and Furious Fifties, a world that is almost entirely ocean. The only land is New Zealand, Tasmania, the southern coast of Australia facing Antarctica, the tip of South Africa and the narrow part of South America (southern Chile and Argentina). Roughly 96% of the band is ocean.
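The claim that the two bands are exactly the same size follows from spherical geometry: the area between latitudes φ1 and φ2 is 2πR²(sin φ2 − sin φ1), out of a total of 4πR². A minimal sketch, assuming a perfectly spherical Earth (the helper `band_fraction` is hypothetical, written only for illustration):

```python
import math

def band_fraction(lat1_deg: float, lat2_deg: float) -> float:
    """Fraction of a sphere's surface between two latitudes (degrees, south negative).

    Assumes a perfectly spherical Earth: the zonal area between phi1 and phi2
    is 2*pi*R^2*(sin(phi2) - sin(phi1)), out of a total area of 4*pi*R^2.
    """
    lo, hi = sorted((lat1_deg, lat2_deg))
    return (math.sin(math.radians(hi)) - math.sin(math.radians(lo))) / 2.0

north = band_fraction(30, 60)    # 30-60N band
south = band_fraction(-60, -30)  # 30-60S band
print(f"30-60N: {north:.3f}, 30-60S: {south:.3f}")  # prints "30-60N: 0.183, 30-60S: 0.183"
```

Each band works out to roughly 18% of the globe's surface, and the two are equal by symmetry. The ~96% ocean figure is not computed here; it would need a land mask, which this sketch does not attempt.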

…

…

Conclusion

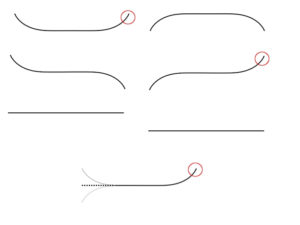

Given that the 30-60S latitude band is almost entirely ocean, it seems logical that IPCC and PAGES2K should use data from ocean proxies to estimate past temperature in this band. But this isn’t what they’ve done. Instead, they’ve purported to estimate past temperature from a few scattered tree ring chronologies, only one of which reaches earlier than AD1850, and from an idiosyncratic singleton pigment series. Ironically, the only 30-60S proxy series in PAGES 2019 that reaches back into the first millennium – the Mount Read, Tasmania tree ring series – was used by Mann et al 1998-1999, Jones et al 1998 and numerous other supposedly “independent” multiproxy studies. Neither of the two series reaching back to the medieval period permits the conclusion that the modern period is warmer than the medieval period. Caveat: I’m not saying that it isn’t, only that this data doesn’t show it, let alone support the big-bladed hockey stick (HS) cited by IPCC. High-resolution alkenone measurements from ocean cores offshore Chile show a consistent decrease in ocean temperatures over the past two millennia that is neither reported nor discussed by IPCC (or PAGES 2019).

To be clear, some of the technical articles on 30-60S ocean core proxies by specialist authors are truly excellent and far more magisterial than anything the IPCC mustered, in particular several articles on offshore Chile. Here are a few:

Mohtadi et al 2007. Cooling of the southern high latitudes during the Medieval Period and its effect on ENSO link

Kilian and Lamy 2012. A review of Glacial and Holocene paleoclimate records from southernmost Patagonia (49-55°S) link

Collins et al 2019. Centennial‐Scale SE Pacific Sea Surface Temperature Variability Over the Past 2,300 Years link