by F. Menton, Oct 28, 2021 in WUWT

“The climate is changing, and we are the cause.” That statement is repeated and affirmed so often that it goes way beyond mere conventional wisdom. You probably encounter some version of it multiple times per week, maybe dozens of times. Everybody knows that it is true! And to express disagreement with that statement, probably more so than with any other element of current progressive orthodoxy, is a sure way to get yourself labeled a “science denier,” fired from an academic job, or even banished from the internet.

The UN IPCC’s recent Sixth Assessment Report on the climate is chock full of one version after another of the iconic statement, in each instance of course emphasizing that the human-caused climate changes are deleterious and even catastrophic. Examples:

- Human influence has likely increased the chance of compound extreme events since the 1950s. This includes increases in the frequency of concurrent heatwaves and droughts on the global scale (high confidence); fire weather in some regions of all inhabited continents (medium confidence); and compound flooding in some locations (medium confidence). (Page A.3.5)

- Event attribution studies and physical understanding indicate that human-induced climate change increases heavy precipitation associated with tropical cyclones (high confidence) but data limitations inhibit clear detection of past trends on the global scale. (Page A.3.4, Box TS.10)

- Some recent hot extreme events would have been extremely unlikely to occur without human influence on the climate system. (Page A.3.4, Box TS.10)

So, over and over, we are told either that there is “high confidence” that human influence is the cause, or that events would have been “extremely unlikely” without human influence. But how, really, do we know that? What is the proof?

This seems to me to be rather an important question. After all, various world leaders are proposing to spend some tens or hundreds of trillions of dollars to undo what are viewed as the most important human influences on the climate (use of fossil fuels). Billions of people are to be kept in, or cast into, energy poverty to appease the climate change gods. Political leaders from every country in the world are about to convene in Scotland to agree to a set of mandates that will transform most everyone’s life. You would think that nobody would even start down this road without definitive proof that we know the cause of the problem and that the proposed solutions are sure to work.

If you address my question (what is the proof?) to the UN, they seem at first glance to have an answer. Their answer is “detection and attribution studies.” These are “scientific” papers that purport to look at evidence and conclude that the events under examination, whether temperature rise, hurricanes, tornadoes, heat waves, or whatever, can be “attributed” to human influences. But the reason I put the word “scientific” in quotes is that just because a particular paper appears in a “scientific” journal does not mean that it has followed the scientific method.

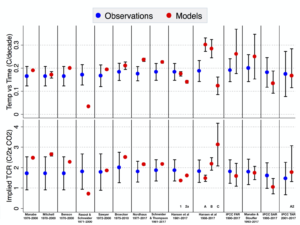

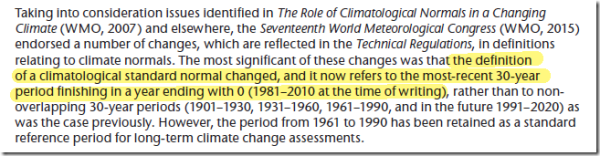

The UN IPCC’s latest report, known as “Assessment Report 6” or “AR6,” came out in early August, loaded up, as already noted, with one statement after another about “high confidence” in attribution of climate changes and disasters to human influences. In the couple of months since, a few statisticians who actually know what they are doing have responded. On August 10, right on the heels of the IPCC, Ross McKitrick, an economist and statistician at the University of Guelph in Canada, came out with a paper in Climate Dynamics titled “Checking for model consistency in optimal fingerprinting: a comment.” On October 22, the Global Warming Policy Foundation then published two reports on the same topic, the first by McKitrick titled “Suboptimal Fingerprinting?”, and the second by statistician William Briggs titled “How the IPCC Sees What Isn’t There.” (Full disclosure: I am on the Board of the American affiliate of the GWPF.)

…

…